KONVENS'19 - The German-focused NLP Conference

KONVENS is an annual conference that brings together the community of computer scientists and computational linguists working with the German language.

This year’s edition of KONVENS was held in Erlangen, Germany, at the Friedrich-Alexander University Erlangen-Nürnberg. The conference lasted four days. The first day was reserved for tutorials and workshops, while the following days featured presentations and poster sessions, with coffee breaks and networking opportunities in between to encourage conversation, keep the mood up, and help attendees get to know each other.

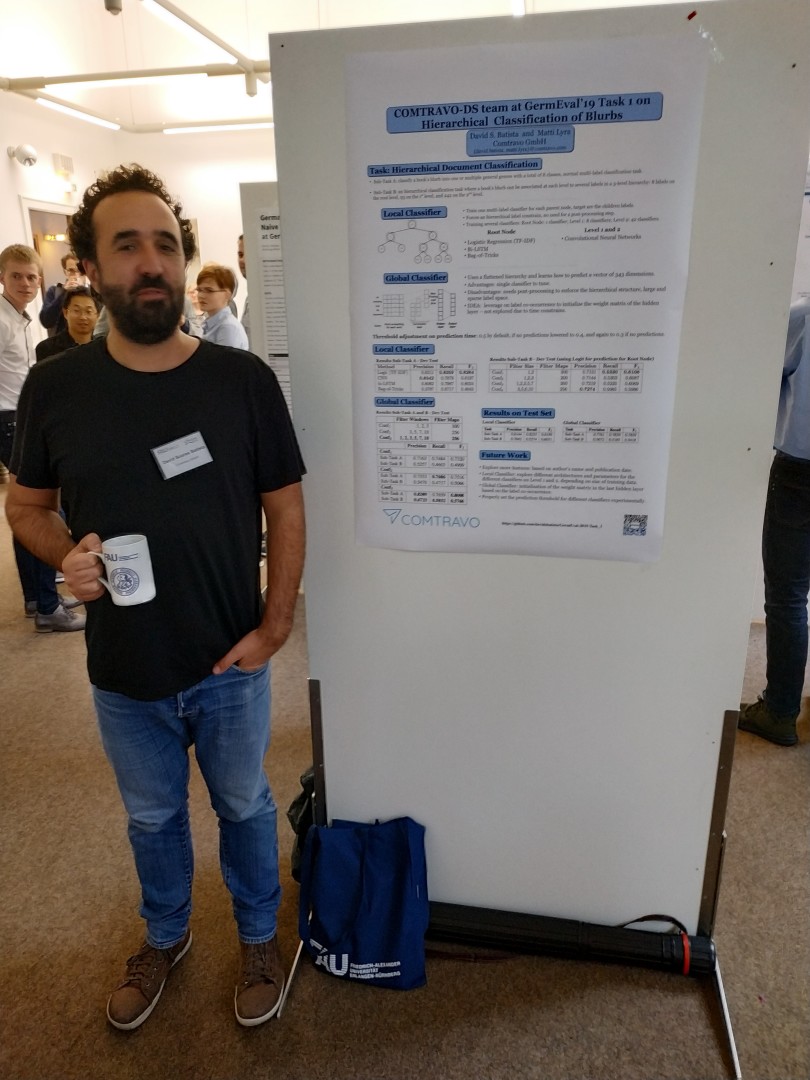

We participated in one of this year’s shared tasks and presented a poster at the conference.

Workshop

This year’s workshops included a data challenge, GermEval, consisting of two shared tasks, in the vein of SemEval but focusing on German NLP tasks. The two proposed tasks were:

- GermEval Task 1, 2019 — Shared Task on Hierarchical Classification of Blurbs

- GermEval Task 2, 2019 — Shared Task on the Identification of Offensive Language

GermEval: Hierarchical Classification of Blurbs

The Comtravo data science team participated in the Hierarchical Classification task. The challenge was a document classification task in which the labels form a hierarchy, and that hierarchical structure needs to be captured for each document. The documents were blurbs, i.e., short descriptions of books, and each one had to be classified into one or more of many possible categories.

The task was further divided into two sub-tasks, one targeting only the first level of the hierarchy (Sub-Task A), and the second targeting the second and third levels (Sub-Task B). In our approach to the task, we explored two different strategies.
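To make the split between the sub-tasks concrete, here is a minimal sketch of how labels in a hierarchy can be expanded to their ancestors and then filtered by level. The genre names and the `PARENT` map are made up for illustration; they are not the actual GermEval label set, and this is not the code we submitted.

```python
from typing import Dict, Iterable, List, Optional, Set

# Hypothetical child -> parent map (None marks a top-level genre).
# These genre names are invented for illustration only.
PARENT: Dict[str, Optional[str]] = {
    "Fiction": None,
    "Crime": "Fiction",
    "Police Procedural": "Crime",
    "Non-Fiction": None,
    "History": "Non-Fiction",
}


def ancestors(label: str) -> List[str]:
    """Return the path from the top-level genre down to `label` (inclusive)."""
    path: List[str] = []
    node: Optional[str] = label
    while node is not None:
        path.append(node)
        node = PARENT[node]
    return path[::-1]


def depth(label: str) -> int:
    """Level of a label in the hierarchy; top-level genres have depth 1."""
    return len(ancestors(label))


def expand(labels: Iterable[str]) -> Set[str]:
    """Add every ancestor of every label, so the set respects the hierarchy."""
    closed: Set[str] = set()
    for label in labels:
        closed.update(ancestors(label))
    return closed


def at_levels(labels: Iterable[str], levels: Set[int]) -> Set[str]:
    """Keep only the (expanded) labels that sit at the given levels."""
    return {label for label in expand(labels) if depth(label) in levels}


gold = {"Police Procedural", "History"}
first_level = at_levels(gold, {1})        # what a first-level task would score
deeper_levels = at_levels(gold, {2, 3})   # the second and third levels
```

Expanding to ancestors first means a prediction of a deep label automatically implies its parent genres, which is one simple way to keep predictions consistent with the hierarchy.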

The first one was based on a more classic machine learning approach, a logistic regression classifier with TF-IDF weighted vectors. The second approach was based on Convolutional Neural Networks for text classification.
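As a rough illustration of the text representation in the first approach, the sketch below computes TF-IDF weighted vectors in plain Python. It covers only the weighting step; a classifier such as logistic regression would then be trained on these vectors. The unsmoothed `log(N / df)` variant and the toy blurbs are assumptions made for this example, not the exact setup of our submission.

```python
import math
from collections import Counter
from typing import Dict, List


def tfidf(docs: List[List[str]]) -> List[Dict[str, float]]:
    """Return one sparse {term: weight} vector per tokenised document."""
    n_docs = len(docs)

    # Document frequency: in how many documents does each term occur?
    df: Counter = Counter()
    for tokens in docs:
        df.update(set(tokens))

    vectors: List[Dict[str, float]] = []
    for tokens in docs:
        counts = Counter(tokens)
        vectors.append({
            # relative term frequency * inverse document frequency
            term: (count / len(tokens)) * math.log(n_docs / df[term])
            for term, count in counts.items()
        })
    return vectors


# Invented toy blurbs, already lower-cased and whitespace-tokenised.
blurbs = [
    "ein spannender krimi aus berlin".split(),
    "ein kochbuch mit rezepten aus italien".split(),
    "ein roman über eine familie".split(),
]
vectors = tfidf(blurbs)
```

In this toy corpus "ein" occurs in every blurb and therefore gets weight zero, while "krimi" occurs in only one blurb and ends up with the highest weight in the first vector. That is the property that makes TF-IDF useful here: words that distinguish one blurb from the others dominate its representation.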

Our submissions ranked 13th out of 19 for Sub-Task A and 11th out of 19 for Sub-Task B. You can read the paper describing our approach, COMTRAVO-DS team at GermEval 2019 Task 1 on Hierarchical Classification of Blurbs, and the code is publicly available.

We had many extensions and ideas for these two baselines that were worth exploring, but due to time constraints we only submitted the baseline systems.

One curious aspect of the two winning solutions for this challenge is that they took completely different approaches. One was based on state-of-the-art neural networks for seq2seq tasks, and the other on an SVM with TF-IDF weighted vectors for text representation.

You can read the papers of both winning teams here:

- Multi-Label Multi-Class Hierarchical Classification using Convolutional Seq2Seq

- TwistBytes - Hierarchical Classification at GermEval 2019: walking the fine line (of recall and precision)

GermEval: Identification of Offensive Language

The second shared task attracted many more participants and dealt with the classification of German tweets. The task contained three sub-tasks: a coarse-grained binary classification task (i.e., is the tweet offensive or not); a fine-grained multi-class classification task (i.e., insult, abuse, profanity); and a final classification of whether an offensive tweet is implicit or explicit. The winning solution for most of the sub-tasks was based on BERT.

Main Conference

The days after the workshops were dedicated to several topics in the field of NLP applied to German. Having a mixed community of computer scientists and computational linguists results in a diverse range of research work. I will briefly list some of the papers that caught my attention.

BERT, which has been applied to many tasks, featured in several of the presented works. Wiedemann et al. carried out an extensive study of how different contextualised word embeddings perform on Word Sense Disambiguation: Does BERT Make Any Sense? Interpretable Word Sense Disambiguation with Contextualised Embeddings.

BERT was also used for named entity recognition in historic German texts: BERT for Named Entity Recognition in Contemporary and Historic German, by Kai Labusch, Clemens Neudecker and David Zellhöfer.

Ortmann et al. evaluated several out-of-the-box NLP software packages for processing German on several distinct tasks, such as tokenisation, part-of-speech tagging, lemmatisation, and dependency parsing: Evaluating Off-the-Shelf NLP Tools for German.

Koppen et al. presented a paper on Label Propagation of Polarity Lexica on Word Vectors, an automatic method to expand sentiment lexicons for tasks such as sentiment analysis.
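To give an intuition for the general idea of propagating polarity labels over word vectors, here is a toy sketch of generic label propagation on a cosine-similarity graph. It is not the algorithm from the paper; the two-dimensional vectors, the seed words, and the `propagate` function are all invented for this example.

```python
import math
from typing import Dict, List


def cosine(u: List[float], v: List[float]) -> float:
    """Cosine similarity between two dense vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def propagate(vectors: Dict[str, List[float]],
              seeds: Dict[str, float],
              iterations: int = 20) -> Dict[str, float]:
    """Spread seed polarities to unlabelled words via similarity-weighted averaging."""
    words = list(vectors)
    # Edge weights: non-negative cosine similarity between every pair of words.
    weights = {
        w: {o: max(0.0, cosine(vectors[w], vectors[o])) for o in words if o != w}
        for w in words
    }
    scores = {w: seeds.get(w, 0.0) for w in words}
    for _ in range(iterations):
        updated = {}
        for w in words:
            if w in seeds:
                updated[w] = seeds[w]  # seed words keep their known polarity
                continue
            total = sum(weights[w].values())
            weighted = sum(weights[w][o] * scores[o] for o in weights[w])
            updated[w] = weighted / total if total else 0.0
        scores = updated
    return scores


# Invented toy embeddings: one axis loosely "positive", the other "negative".
vectors = {
    "gut": [1.0, 0.1],
    "toll": [0.9, 0.2],
    "schlecht": [0.1, 1.0],
    "mies": [0.2, 0.9],
}
seeds = {"gut": 1.0, "schlecht": -1.0}
scores = propagate(vectors, seeds)
```

With these toy vectors, "toll" ends up with a positive score because it sits close to the positive seed "gut", and "mies" ends up negative because it sits close to "schlecht". Real lexicon expansion would work on pretrained embeddings and a much larger seed lexicon.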

There was also research from a more linguistically oriented perspective, which I found interesting. Metaphor detection for German Poetry presents a manually created dataset of adjective-noun pairs from expressionist poems, annotated for metaphoricity, together with a method to detect metaphors in poems.

Summary

Although the conference is small compared to other broader academic NLP conferences such as EMNLP or ACL, I believe KONVENS is the right place to discuss and follow the research on NLP applied to German. Looking back at the conference throughout the last few years, it has definitely grown.

The data challenge workshops are also an interesting playground to apply and explore new methods and provide open data for the community.

I’m looking forward to the next edition, which will take place in Switzerland, co-located with SwissText.
